Explore Aratako’s latest MioTTS project, a series of ultra-lightweight TTS models based on LLM architecture. Ranging from an extremely small 0.1B version to a high-quality 2.6B model, MioTTS is paired with the custom neural audio encoder MioCodec to deliver fast inference while maintaining high-fidelity audio. This article analyzes its technical characteristics, model family, and how to easily deploy it using existing LLM tools.

In the field of Artificial Intelligence Text-to-Speech (TTS), developers often face a difficult choice: pursuing extreme realism usually means massive models and expensive computational costs; if speed and lightweight design are prioritized, the resulting voice often sounds mechanical and lacks soul. However, the latest MioTTS project released by open-source developer Aratako seems to have found a new way to break this deadlock.

This is not just another voice model, but a solution optimized for “lightweight” and “real-time inference.” Imagine compressing voice generation technology, which originally required high-end graphics cards, to fit into a single-board computer or even an old smartphone, while maintaining impressive naturalness. MioTTS was born to realize this vision.

Disrupting Traditional Architecture: When Voice Generation Meets LLM

The core innovation of MioTTS lies in its choice of underlying architecture. Unlike traditional TTS that relies on specific Generative Adversarial Networks (GANs) or Diffusion models, MioTTS is a standard “LLM-based” system.

What does this mean? Simply put, MioTTS treats voice generation as a “language prediction” task. It converts audio into discrete tokens, and just as ChatGPT predicts the next word, MioTTS predicts the next audio token. This design brings a huge compatibility advantage: theoretically, any tool capable of running Large Language Models can run MioTTS.

The adoption of this architecture directly solves the deployment problems that cause the most headaches for developers. There’s no need to set up a complex Python environment specifically for TTS. Through optimized LLM inference engines, voice generation can enjoy the same level of acceleration and optimization as text generation.

The Core of Hearing: The Custom MioCodec Neural Encoder

To make the model small while keeping the sound pleasant, the key is “compression.” If compressed too much, the sound will be distorted; if not compressed enough, the model will process it slowly.

To achieve the perfect balance between the two, the developer did not use common encoders available on the market but specially developed MioCodec for this project. This is a custom neural audio encoder with a very clear design goal: to reduce latency.

While maintaining a high sampling rate of 44.1 kHz, MioCodec holds the frame rate at 25 Hz. For developers, this is the number that matters most. A lower frame rate means the model has to generate fewer tokens for every second of audio, which in turn significantly improves generation speed. This is why even the smallest 0.1B model can produce clear, bright sound. Furthermore, the encoder itself is open-sourced under the MIT license, demonstrating the developer’s contribution to the open-source community.
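To make the frame-rate argument concrete, here is a small back-of-the-envelope sketch. It uses only the two figures quoted above (44.1 kHz and 25 Hz). The `tokens_per_frame` parameter and the 50 Hz comparison codec are assumptions made for illustration, not details taken from the MioCodec documentation.

```python
import math

SAMPLE_RATE_HZ = 44_100  # sampling rate quoted in the article
FRAME_RATE_HZ = 25       # codec frame rate quoted in the article


def samples_per_frame(sample_rate_hz: int = SAMPLE_RATE_HZ,
                      frame_rate_hz: int = FRAME_RATE_HZ) -> float:
    """How many raw audio samples one codec frame stands for."""
    return sample_rate_hz / frame_rate_hz


def tokens_for(duration_s: float,
               frame_rate_hz: int = FRAME_RATE_HZ,
               tokens_per_frame: int = 1) -> int:
    """Tokens the LLM must generate to cover `duration_s` seconds of audio.

    tokens_per_frame=1 is an assumption (one token per frame); adjust it
    if the codec emits several tokens per frame.
    """
    return math.ceil(duration_s * frame_rate_hz) * tokens_per_frame


if __name__ == "__main__":
    print("samples per frame:", samples_per_frame())
    print("tokens for 10 s at 25 Hz:", tokens_for(10))
    # Hypothetical 50 Hz codec, shown only for comparison.
    print("tokens for 10 s at 50 Hz:", tokens_for(10, frame_rate_hz=50))
```

Under these assumptions, halving the frame rate halves the number of autoregressive steps for the same clip, which is where the speed-up comes from.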

Zero-Shot Voice Cloning: “Mimicry” in Just 20 Seconds

In the past, mimicking a specific person’s voice often required hours of recording data for fine-tuning. MioTTS leverages the powerful in-context learning capabilities of modern LLMs to achieve “Zero-Shot Voice Cloning.”

Users only need to provide a reference audio clip of about 20 seconds, and the model can analyze the timbre, tone, and speaking style within it and apply them to new text generation. This feature is extremely attractive to independent game developers and content creators as it significantly lowers the barrier to voice acting for characters.

Currently, MioTTS has been trained on approximately 100,000 hours of voice data and natively supports two languages, English and Japanese. This is a huge plus for developers who love anime culture or need international applications. The developer also mentioned that while development is primarily in Japanese, they look forward to specific feedback from the community on English prosody performance.

The Model Family Tree: From “Extreme Lightweight” to “Performance Monster”

MioTTS is not a single-size product but a complete model family. The developer has released multiple versions with different parameter sizes based on different base models, allowing users to choose flexibly based on their hardware conditions. You can view the full list through the HuggingFace Collection.

Below is a detailed comparison of each version and an analysis of their application scenarios:

- 0.1B (Falcon-H1-Tiny): The smallest member of the family. The 0.1B parameter size is incredibly small and can run smoothly on almost any edge computing device (such as a Raspberry Pi). Its Real-Time Factor (RTF) is as low as 0.04, meaning it only takes 0.04 seconds of computation to generate 1 second of speech.

- 0.4B (LFM2-350M): Released under the LFM Open License v1.0, suitable for scenarios that require slightly better sound quality but where hardware resources are still constrained.

- 0.6B (Qwen3-0.6B): Using the Apache 2.0 license, this is the most business-friendly lightweight choice.

- 1.2B (LFM2.5-1.2B): The balance point between performance and speed, suitable for most consumer computers.

- 1.7B (Qwen3-1.7B): A further increase in parameter size, capable of capturing more delicate emotional changes, also benefiting from the permissive Apache 2.0 license.

- 2.6B (LFM2-2.6B): The flagship of the current family. Although it has the most parameters, it is still very light compared to mainstream 7B/8B language models. It provides the highest audio fidelity, suitable for projects with strict requirements for audio quality.
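The Real-Time Factor quoted for the 0.1B model is simply compute time divided by audio duration. The sketch below shows the arithmetic. The 0.04 figure comes from the list above, while the clip lengths are arbitrary examples, and the hardware behind the published number is not stated here, so your own measurements may differ.

```python
def real_time_factor(compute_s: float, audio_s: float) -> float:
    """RTF = seconds of computation per second of generated audio."""
    if audio_s <= 0:
        raise ValueError("audio duration must be positive")
    return compute_s / audio_s


def compute_time(rtf: float, audio_s: float) -> float:
    """Expected computation time for a clip of `audio_s` seconds."""
    return rtf * audio_s


if __name__ == "__main__":
    rtf = 0.04  # figure quoted for the 0.1B model
    for seconds in (1, 10, 60):  # arbitrary example clip lengths
        print(f"{seconds:>3} s of speech -> {compute_time(rtf, seconds):.2f} s of compute")
```

Any RTF below 1.0 means the model generates audio faster than it plays back, which is the threshold for real-time use.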

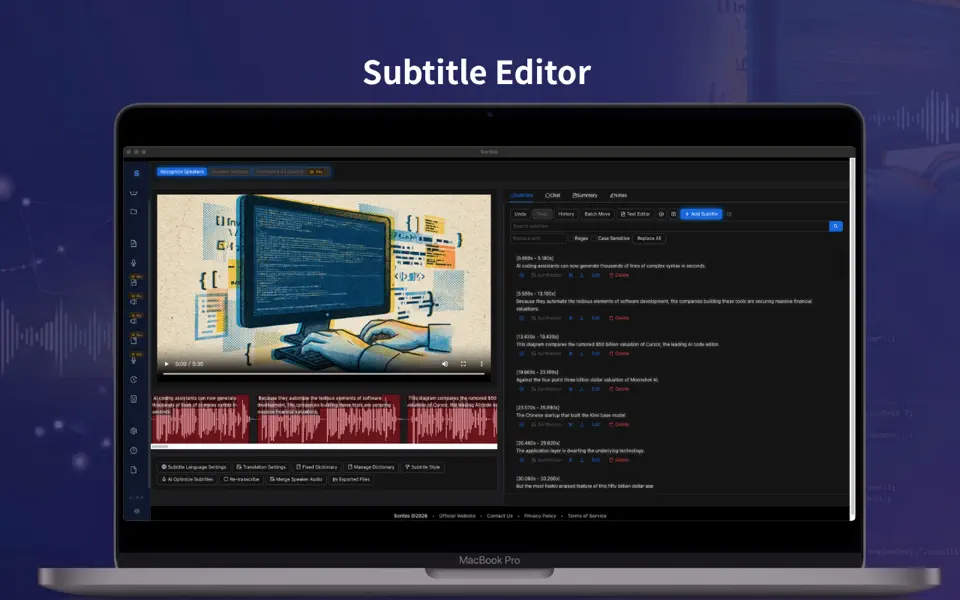

Practical Deployment: Since it’s an LLM, Run it like an LLM

This is perhaps the most charming part of MioTTS. Because its architecture is compatible with LLMs, you don’t need to struggle with a complex stack of PyTorch dependencies. If you have tools like llama.cpp or Ollama installed on your computer, you’ve already completed half of the deployment work.

In fact, the Inference Code provided by the developer demonstrates a minimalist deployment process. Users can load the MioTTS model into a local Ollama service and then send text and reference audio through standard API interfaces. The system will return a Base64 encoded WAV file.
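On the client side, the last step is turning that Base64 string back into a playable file. The sketch below covers only that step, using Python's standard library. It does not call the MioTTS server: the payload is a locally built silent WAV that stands in for a real response, and the helper names are hypothetical.

```python
import base64
import io
import wave


def make_demo_payload(n_frames: int = 4410, sample_rate: int = 44_100) -> str:
    """Build a silent mono 16-bit WAV and Base64-encode it.

    This is a stand-in for a server reply, used only so the example runs
    without a TTS backend.
    """
    buf = io.BytesIO()
    with wave.open(buf, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(2)  # 16-bit samples
        w.setframerate(sample_rate)
        w.writeframes(b"\x00\x00" * n_frames)
    return base64.b64encode(buf.getvalue()).decode("ascii")


def decode_wav(b64_audio: str) -> tuple[bytes, int, float]:
    """Decode a Base64 WAV string into (raw bytes, sample rate, duration in s)."""
    raw = base64.b64decode(b64_audio)
    with wave.open(io.BytesIO(raw), "rb") as w:
        rate = w.getframerate()
        duration = w.getnframes() / rate
    return raw, rate, duration


if __name__ == "__main__":
    raw, rate, duration = decode_wav(make_demo_payload())
    with open("output.wav", "wb") as f:  # the decoded bytes are a complete WAV file
        f.write(raw)
    print(f"saved output.wav: {rate} Hz, {duration:.2f} s")
```

In a real integration, the string passed to `decode_wav` would come from whichever response field the inference server uses; check the project's inference code for the exact request and response format.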

This design greatly reduces integration difficulty. Imagine being able to run your chatbot and voice synthesis service simultaneously in a Docker container, both sharing the same inference backend. This is a significant saving in system resources. For users who want a sneak peek, the developer also provides an online demo of the 0.1B version for direct testing.

Frequently Asked Questions (FAQ)

To help you get started with MioTTS faster, we have compiled a few of the most common questions from the community about this project:

Q1: Can these models be used for free in commercial projects? It depends on the specific model version you choose. Different sizes of MioTTS are based on different base models, so the license terms vary:

- 0.6B and 1.7B versions are based on Qwen and use the Apache 2.0 license, a permissive open-source license that allows commercial use.

- 0.4B, 1.2B, and 2.6B versions are based on LFM and follow the LFM Open License v1.0.

- 0.1B version is based on Falcon and follows the Falcon-LLM License.

Before use, please be sure to confirm the specific license terms of your chosen model to avoid legal disputes.

Q2: Can I run it if I only have a CPU? Absolutely, and the experience might be better than you imagine. Thanks to the support for GGUF quantization technology and the lightweight design of the model itself, the 0.1B and 0.4B versions can achieve nearly real-time generation on modern CPUs. Even for larger models running through system memory (RAM), the generation speed is completely acceptable for non-real-time applications.
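A rough way to see why CPU inference is plausible is to estimate how much memory the weights alone occupy at different precisions. The sketch below is an approximation under stated assumptions: it uses the nominal parameter counts from the model names (actual counts differ somewhat), treats bits per weight as a flat number (real quantization formats add some overhead), and ignores the codec, the KV cache, and activations.

```python
# Nominal parameter counts taken from the model names in this article.
NOMINAL_PARAMS = {
    "0.1B": 0.1e9,
    "0.4B": 0.4e9,
    "0.6B": 0.6e9,
    "1.2B": 1.2e9,
    "1.7B": 1.7e9,
    "2.6B": 2.6e9,
}


def weight_bytes(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in bytes."""
    return n_params * bits_per_weight / 8


if __name__ == "__main__":
    for name, n in NOMINAL_PARAMS.items():
        fp16_mb = weight_bytes(n, 16) / 1e6
        q4_mb = weight_bytes(n, 4) / 1e6
        print(f"{name}: ~{fp16_mb:,.0f} MB at 16-bit, ~{q4_mb:,.0f} MB at 4-bit")
```

Even by this crude estimate, the smaller models fit comfortably in the RAM of an ordinary laptop or single-board computer.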

Q3: Besides English and Japanese, does it support Chinese? Currently, the officially released models are trained only on English and Japanese, using about 100,000 hours of speech. While you can try to input Chinese, the model might have inaccurate pronunciation or a strange accent. However, since MioTTS uses a standard LLM architecture, it is very likely that the open-source community will add Chinese support through fine-tuning in the future.

Q4: What is the “Best-of-N” feature? Should I turn it on? Autoregressive models can sometimes produce pronunciation errors or word repetitions. MioTTS’s built-in “Best-of-N” mechanism generates N audio candidates (e.g., 4) at once and then uses an automatic speech recognition (ASR) model to score them, picking the one whose transcript best matches the text.

- When to turn it on: When you are making video dubbing or have pre-recording needs where accuracy is more important than speed.

- When to turn it off: When you are in a real-time voice chat and need the lowest latency.
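The selection logic behind Best-of-N can be sketched in a few lines. This is a minimal illustration of the idea, not the project's implementation: the `transcribe` callable is a placeholder for whatever ASR model is used, and the character-level similarity from `difflib` is an assumed scoring metric chosen because it ships with Python.

```python
from difflib import SequenceMatcher
from typing import Callable, Sequence


def similarity(expected: str, heard: str) -> float:
    """Similarity in [0, 1] between the target text and an ASR transcript."""
    return SequenceMatcher(None, expected.lower().strip(), heard.lower().strip()).ratio()


def best_of_n(text: str,
              candidates: Sequence[bytes],
              transcribe: Callable[[bytes], str]) -> int:
    """Return the index of the candidate whose transcript best matches `text`."""
    if not candidates:
        raise ValueError("need at least one candidate")
    scores = [similarity(text, transcribe(audio)) for audio in candidates]
    return max(range(len(scores)), key=scores.__getitem__)


if __name__ == "__main__":
    # Fake "audio" and a fake ASR lookup so the example runs without any model.
    fake_transcripts = {
        b"take-1": "hello hello world",  # word repetition
        b"take-2": "hello world",        # clean
        b"take-3": "hollow word",        # mispronunciation
    }
    takes = list(fake_transcripts)
    winner = best_of_n("Hello world", takes, fake_transcripts.__getitem__)
    print("selected:", takes[winner])
```

The cost is visible in the structure: every request pays for N generations plus N transcriptions, which is why the feature suits offline dubbing better than live conversation.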

Q5: Why does my voice sound a bit mechanical? This is usually related to the quality of the “reference audio.” Although it is zero-shot cloning, the clearer and less noisy the input reference audio, the better the model captures the features. Additionally, it’s recommended to use real human recordings as references and avoid using audio generated by other TTS for “double cloning,” which can lead to cumulative digital distortion.